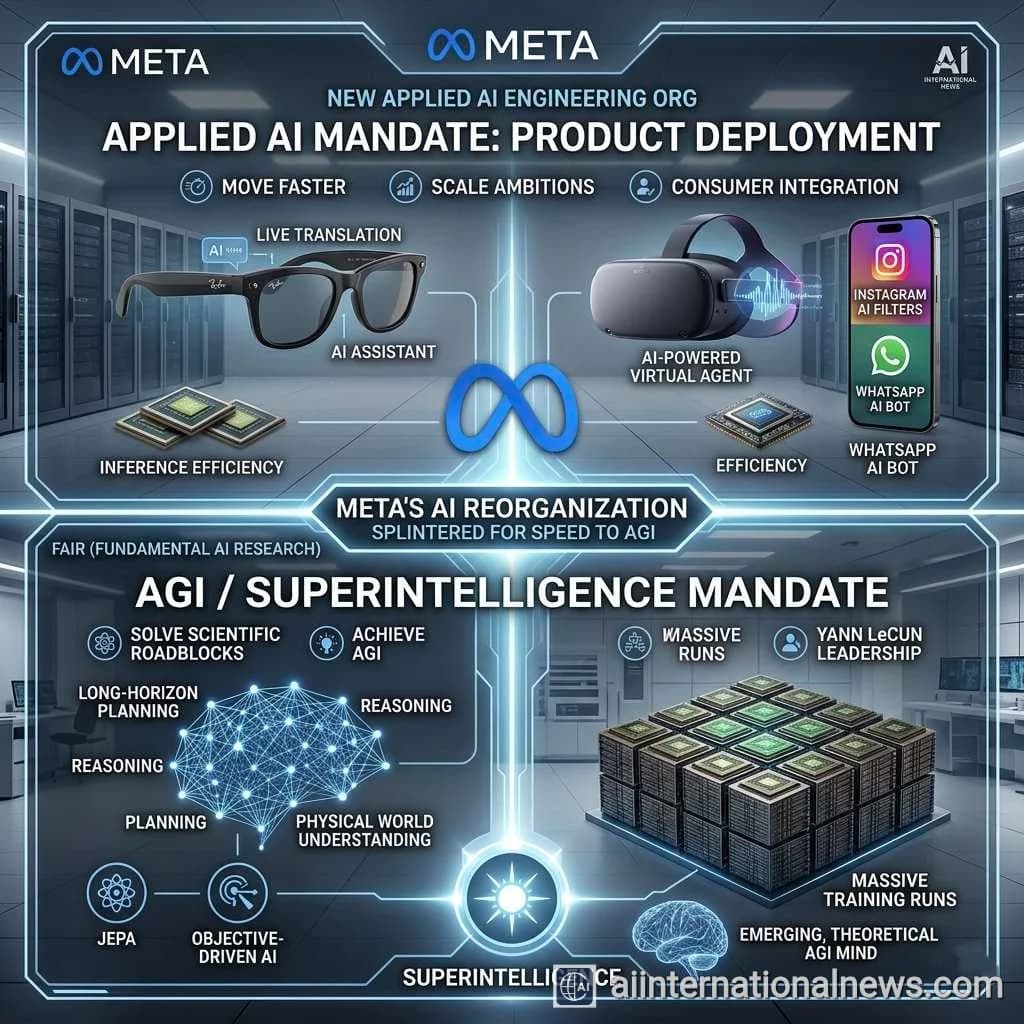

BREAKING: Meta CEO Mark Zuckerberg has announced a sweeping reorganization of the company's artificial intelligence divisions, establishing a brand-new applied AI engineering organization. This strategic pivot splits Meta's AI talent into specialized factions, designed to ruthlessly accelerate the deployment of generative models across its ecosystem while freeing up core researchers to pursue the ultimate holy grail: Artificial General Intelligence (AGI) and superintelligence.

As the arms race for artificial intelligence reaches a fever pitch in 2026, Meta is fundamentally restructuring its battle lines. Mark Zuckerberg, having successfully navigated the company through the turbulent waters of the early Metaverse pivot, is now setting his sights on the most ambitious technological milestone in human history. By splintering Meta's AI operations into distinct, highly focused units, the tech behemoth is signaling a transition from pure academic research to aggressive, full-scale commercial deployment, all while keeping one eye fixed firmly on the horizon of superintelligence.

For years, Meta's AI efforts have been a massive, somewhat homogenized engine. Now, recognizing that the skills required to invent a frontier model are vastly different from the skills required to integrate that model into a pair of smart glasses or a WhatsApp chatbot, Zuckerberg is unbundling the talent. This new applied AI engineering organization is tasked with taking the raw, intellectual horsepower generated by Meta's foundational researchers and forging it into consumer-ready products that touch billions of lives daily. The reorganization is not merely an administrative shuffle; it is a declaration of war against tech stagnation and a roadmap for how Meta plans to dominate the next decade of digital interaction.

The Architecture of Ambition: Why Splinter the AI Division?

To understand the necessity of this radical restructuring, one must look at the inherent tension that exists within any mega-corporation attempting to push the boundaries of frontier science. On one side, you have the visionaries—researchers who need the freedom, compute power, and time to experiment with novel neural architectures, long-horizon planning, and reasoning capabilities. On the other side, you have product managers and engineers who are beholden to quarterly earnings, user engagement metrics, and seamless UI/UX integration. When these two factions are forced to operate under a single, unified mandate, bureaucracy inevitably throttles innovation.

Zuckerberg's decision to cleave the organization in two solves this bottleneck. By establishing a dedicated applied AI team, he is effectively creating an agile startup within the trillion-dollar corporation. This team's sole mandate is velocity. They are not tasked with writing academic papers for NeurIPS; they are tasked with ensuring that when a user asks their Ray-Ban Meta glasses to translate a menu in Tokyo or identify a landmark in Paris, the response is instantaneous, accurate, and contextually perfect. This requires specialized engineering focused on latency reduction, model quantization, and edge computing.

FAIR vs. Applied AI: The Two-Pronged Spear

At the core of this strategy is the preservation and elevation of FAIR (Fundamental AI Research), the legendary division founded by Chief AI Scientist Yann LeCun in 2013 as Facebook AI Research. For more than a decade, FAIR has operated almost like an open-source university embedded within a social media giant. Under the new organizational structure, FAIR is liberated from the daily grind of product integration. Its mandate has been clarified and amplified: solve the foundational roadblocks preventing Artificial General Intelligence.

While the applied AI team iterates on current-generation Llama models to boost ad targeting and content recommendation algorithms, FAIR is looking years into the future. LeCun has been vocal about the limitations of current Large Language Models (LLMs), arguing that autoregressive prediction is not true intelligence. FAIR is now fully unleashed to explore Objective-Driven AI, Joint Embedding Predictive Architecture (JEPA), and multimodal systems that can reason, plan, and understand the physical world much like a human does. By splitting the teams, Zuckerberg ensures that the quest for AGI is not compromised by the immediate need to ship software updates.

Abhijeet's Take: Zuckerberg is executing the most ruthless and brilliant organizational decoupling we've seen since Google created Alphabet. The problem with massive AI labs like DeepMind or OpenAI is that as they get closer to commercializing their products, their foundational research slows down. The applied product teams end up cannibalizing the compute resources and time of the visionary scientists. By creating a hard split between the 'Applied AI' organization and 'FAIR', Zuckerberg is allowing Meta to have its cake and eat it too. The applied team will act as a revenue engine, turning Llama variants into highly profitable features across Instagram, WhatsApp, and the Quest headsets. Meanwhile, FAIR is placed in a protective bubble, given a blank check and a mountain of H100s, and told not to come out until it has built superintelligence. It is a masterstroke in corporate agility that positions Meta to outmaneuver both Google's bureaucracy and OpenAI's internal safety politics.

The Open-Source Moat and the Llama Ecosystem

This organizational shift is inextricably linked to Meta's aggressive open-source strategy. By releasing the weights of its Llama models to the public, Meta has effectively commoditized the foundational model layer, forcing competitors like Google and OpenAI to constantly defend their closed-source ecosystems. However, an open-source model is only as valuable as the ecosystem built around it. This is where the new applied AI engineering team becomes Meta's most lethal weapon.

The applied team is positioned to take the openly released Llama models and rapidly fine-tune them for highly specific, proprietary Meta use cases. Because the model weights are public, external developers across the globe are constantly finding new ways to optimize them, fix bugs, and reduce inference costs. Meta's applied engineers can harvest this global, crowdsourced innovation and inject it directly into Meta's commercial products. This creates a feedback loop: FAIR builds the base model, the open-source community optimizes it, and the applied AI team monetizes it at an unprecedented scale.

Key Points: Meta's AI Reorganization

- New Applied AI Org: Meta has created a specialized applied AI engineering team focused solely on integrating generative AI into consumer products like WhatsApp, Instagram, and wearables.

- FAIR's Singular Focus: The Fundamental AI Research (FAIR) team, led by Yann LeCun, is now unburdened from product timelines, allowing them to focus entirely on foundational breakthroughs and Artificial General Intelligence (AGI).

- Agility at Scale: Splitting the teams prevents bureaucracy from throttling innovation, allowing Meta to ship consumer AI features faster while simultaneously pursuing long-term superintelligence.

- The Hardware Connection: The applied AI team will work closely with the Reality Labs division to integrate low-latency AI into physical devices, specifically the wildly successful Ray-Ban Meta smart glasses.

- Compute Dominance: This structure is designed to maximize the ROI of Meta's massive stockpile of NVIDIA GPUs, ensuring compute is efficiently allocated between product inference and AGI training runs.

Hardware, Wearables, and the Ultimate AI Sandbox

Perhaps the most compelling reason for the creation of an applied AI team is Meta's hardware ambitions. The company is betting that the ultimate interface for superintelligence is not a text box in a web browser; it is immersive hardware. The unexpected, runaway success of the Ray-Ban Meta smart glasses suggests that consumers want AI that sees what they see and hears what they hear.

The applied AI engineering organization will work hand-in-glove with Reality Labs. Their objective is to shrink massive neural networks down to run on edge devices, manage battery consumption, and process multimodal inputs (live video and audio) with near-zero latency. When a user asks their glasses to remember where they parked their car or to provide real-time translation during a conversation, that is an applied AI engineering problem, not a foundational research problem. By establishing a team dedicated to these specific, real-world constraints, Meta is accelerating its vision of an AI assistant that serves as an ever-present, hyper-intelligent companion.

The Compute Engine: Fueling the Superintelligence Drive

None of this organizational restructuring matters without the underlying compute infrastructure. Zuckerberg has amassed one of the largest private stockpiles of advanced silicon in the world: in early 2024 he said Meta expected to have roughly 350,000 NVIDIA H100 GPUs by the end of that year, and the company is aggressively securing next-generation Blackwell chips. Splitting the AI division is meant to ensure that this compute is utilized efficiently.

The applied AI team will focus on inference efficiency: figuring out how to serve AI features to the more than 3 billion people who use Meta's apps every day without melting the data centers or bankrupting the company. To get there, they will lean on model distillation, sparsity, and custom silicon (like Meta's MTIA chips). Meanwhile, FAIR will utilize the heavy-compute clusters for massive, months-long training runs designed to crack the code of superintelligence. This bifurcated approach to compute management is essential for a company attempting to dominate both the present and the future simultaneously.

Conclusion: A Leaner, Meaner Meta

Mark Zuckerberg's creation of a dedicated applied AI engineering organization is a clear signal that the era of AI experimentation is over; the era of AI execution has begun. By carefully separating the visionaries from the pragmatists, Meta is building a corporate structure capable of surviving the brutal, capital-intensive race toward AGI. The applied team will ensure that Meta's current apps remain indispensable and highly profitable, funding the multi-billion-dollar research required by FAIR. As the company pushes relentlessly toward superintelligence, this leaner, more specialized organizational structure may just be the competitive advantage that allows Meta to cross the finish line first.

Frequently Asked Questions

Why did Mark Zuckerberg split Meta's AI division?

Zuckerberg split the division to increase agility and focus. By creating a dedicated 'Applied AI' team, Meta can rapidly integrate AI features into products like Instagram and Ray-Ban glasses without distracting the core researchers at FAIR (Fundamental AI Research), who are tasked with solving the long-term scientific challenges required to achieve Artificial General Intelligence (AGI).

What is the difference between FAIR and the new Applied AI team?

FAIR, led by Yann LeCun, is focused on long-horizon, foundational scientific breakthroughs—effectively inventing the future of superintelligence. The new Applied AI engineering team is focused on practical execution—taking those foundational models (like Llama), optimizing them for speed and cost, and shipping them to billions of consumers across Meta's apps and hardware devices.

How does this reorganization affect Meta's open-source strategy?

It strengthens it. While FAIR continues to develop and open-source the base Llama models for the global community, the new Applied AI team is specifically structured to take those open models, harvest the community's innovations, and rapidly fine-tune them into proprietary, revenue-generating features for Meta's closed ecosystem of apps and smart glasses.