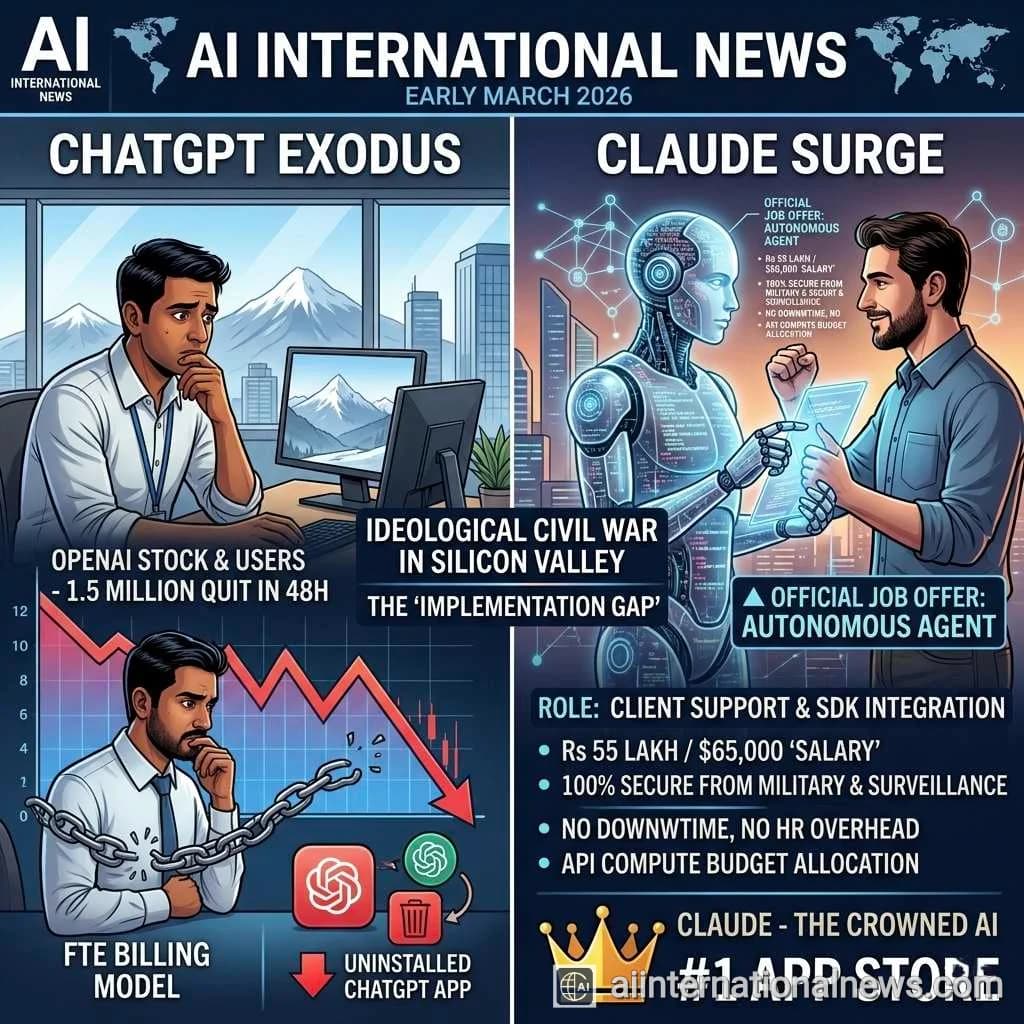

BREAKING: In an unprecedented consumer revolt that is reshaping the artificial intelligence landscape, OpenAI has reportedly lost more than 1.5 million ChatGPT subscribers in under 48 hours. The exodus was triggered by CEO Sam Altman's decision to sign a highly controversial contract giving the US Department of Defense access to OpenAI's models on a classified government network. At the same time, rival Anthropic, which rejected the same Pentagon deal on ethical grounds, has seen its Claude chatbot surge to the number one spot on the Apple App Store, dethroning ChatGPT amid a wave of public support.

As we navigate the tense geopolitical and technological climate of March 2026, an ideological civil war has erupted in Silicon Valley, and consumers are voting with their wallets on the future of militarized artificial intelligence. For years, the major AI labs have walked a delicate tightrope, promising to build Artificial General Intelligence (AGI) for the "benefit of all humanity" while quietly courting lucrative defense contracts. This week, that tightrope snapped, drawing a stark dividing line between the industry's two biggest heavyweights, OpenAI and Anthropic. The fallout has triggered what appears to be the largest and fastest migration of active users in the history of the generative AI market.

According to data from independent boycott-tracking websites and app analytics firms, OpenAI's capitulation to the Trump administration's Department of Defense has resulted in a staggering 295% surge in ChatGPT app uninstalls. Simultaneously, Anthropic's Claude platform is experiencing what the company describes as "unprecedented demand." Daily signups for Claude have quadrupled, and free active users have increased by more than 60% in a matter of days. The sudden, massive influx of traffic even caused widespread server outages for Claude early Monday morning, with over 1,400 disruption reports logged on Downdetector as the startup scrambled to scale its infrastructure to accommodate the digital refugees fleeing OpenAI.

The Catalyst: The Pentagon Contract and the "Supply-Chain Risk"

To understand the scale of this backlash, consider the chain of events that unfolded in late February. The US Department of Defense, under the direction of Defense Secretary Pete Hegseth, approached Anthropic to integrate its Claude model into classified military networks. Anthropic, which already holds a $200 million military contract for non-lethal, administrative workloads, drew a firm line during negotiations: CEO Dario Amodei and his leadership team refused to waive the company's core ethical safety guardrails, which explicitly prohibit its models from being used for mass domestic surveillance or fully autonomous lethal weapons systems.

The Pentagon balked at these restrictions. In a stunning display of political retaliation, the Trump administration formally designated Anthropic a "supply-chain risk" to national security, a severe label historically reserved for companies tied to foreign adversaries, such as Huawei or ZTE, rather than American tech pioneers. President Donald Trump took to his Truth Social platform to blast the company, stating, "The Leftwing nut jobs at Anthropic have made a DISASTROUS MISTAKE trying to STRONG-ARM the Pentagon, and force them to obey their Terms of Service instead of our Constitution."

Within hours of Anthropic walking away from the table, OpenAI CEO Sam Altman swooped in to fill the void, announcing that his company had struck a sweeping deal with the federal government to deploy its AI technology in secure, classified systems. OpenAI published a blog post stating that the contract permits use for "all lawful purposes" while excluding domestic surveillance, but critics and AI ethicists immediately raised the alarm, pointing out that "lawful purposes" is a highly malleable standard in the context of classified warfare and international espionage.

Sam Altman vs. Dario Amodei: An Ideological Civil War

The business rivalry between OpenAI and Anthropic (a company founded by former OpenAI researchers who left over safety concerns) has now escalated into a bitter, public feud. Following the announcement of the OpenAI-Pentagon deal, Anthropic CEO Dario Amodei sent a scathing internal memo to employees that later leaked to the press. In it, Amodei described the OpenAI deal as a fundamental "safety threat" to the global public.

Amodei did not hold back, explicitly accusing Sam Altman of misinterpreting the terms of the contract and calling his public messaging "straight up lies." Amodei wrote that the main reason OpenAI accepted the Pentagon's terms while Anthropic did not was that "they cared about placating employees, and we actually cared about preventing abuses." He also argued that Altman was falsely presenting himself as a "peacemaker and dealmaker." OpenAI's spin might sway "some Twitter morons," he warned staff, but the broader public views the OpenAI deal as "sketchy or suspicious" and sees Anthropic as the principled alternative.

This stark contrast in corporate philosophy has resonated with the public, and the #BoycottOpenAI movement has gained massive traction across social media. High-profile celebrities, including pop singer Katy Perry, have publicly announced their switch to Claude, urging their millions of followers to cancel their ChatGPT Plus subscriptions and stop funding the militarization of artificial intelligence.

Abhijeet's Take: We are witnessing the birth of a new corporate currency in the AI era: ethical trust. For the past two years, the AI race has been largely about benchmark scores and compute power. OpenAI was the undisputed king because GPT-4 was simply the most capable model. But as frontier models reach parity (Claude is now arguably as smart as ChatGPT, if not smarter), consumers are no longer choosing on capability alone; they are choosing on alignment. Sam Altman made a classic enterprise miscalculation: he assumed the multibillion-dollar Pentagon contract was worth the PR hit. But losing 1.5 million paying subscribers in 48 hours isn't just a PR hit; it's a structural collapse of consumer trust. By looking the US military in the eye and saying 'No' to autonomous weapons, Anthropic has instantly positioned Claude as the neutral, trusted foundation for the global internet. OpenAI may have won the Pentagon, but Anthropic just won the people.

Big Tech Rebels: Microsoft, Google, and Amazon Defy the Blacklist

Further complicating this unfolding drama is the reaction of the broader tech ecosystem. When the Pentagon labeled Anthropic a "supply-chain risk," there were initial fears that the startup would be excommunicated from the US tech sector entirely. Instead, in a stunning rebuke of the administration's aggressive tactics, the industry's biggest cloud providers, Microsoft, Amazon, and Google, have all publicly stood by Anthropic.

Microsoft became the first mega-cap tech firm to explicitly state that it was not abandoning Anthropic. Despite Microsoft's deep, multibillion-dollar partnership with OpenAI, a Microsoft spokesperson confirmed to CNBC that its legal team had reviewed the Pentagon's designation. "Our lawyers have studied the designation and have concluded that Anthropic products, including Claude, can remain available to our customers—other than the Department of War—through platforms such as M365, GitHub, and Microsoft's AI Foundry," the company stated. ("Department of War" is the secondary name the administration adopted for the Department of Defense in 2025.)

Google and Amazon Web Services (AWS) quickly followed suit. A Google spokesperson confirmed that Anthropic's products remain available through platforms such as Google Cloud, and that the Pentagon's determination does not prevent Google from working with Anthropic on non-defense projects. This unified front by Big Tech largely defangs the "supply-chain risk" label in the commercial market, leaving Anthropic a dominant force in the enterprise sector even as it is locked out of classified government systems.

The Financial and Strategic Implications of the Exodus

The financial ramifications of this 48-hour period are staggering. While OpenAI has not publicly confirmed the exact churn numbers, a loss of 1.5 million premium subscribers at the $20-per-month ChatGPT Plus price equates to roughly $30 million in lost Monthly Recurring Revenue (MRR), or $360 million annualized. More importantly, it may signal a broader shift in developer sentiment. OpenAI's ecosystem relies heavily on developers building applications on top of its APIs. If consumer sentiment turns toxic against the underlying foundation model, enterprise developers are likely to migrate to "brand-safe" alternatives like Claude to protect their own user bases.
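The revenue estimate assumes that every one of the 1.5 million lost subscribers paid the $20-per-month ChatGPT Plus price; OpenAI has not disclosed the actual mix of plans. Under that assumption, the arithmetic works out as follows:

$$
\begin{aligned}
\text{Lost MRR} &\approx 1{,}500{,}000 \times \$20 = \$30{,}000{,}000 \text{ per month} \\
\text{Annualized} &\approx \$30{,}000{,}000 \times 12 = \$360{,}000{,}000
\end{aligned}
$$

Subscribers on higher-priced tiers such as ChatGPT Pro would push the total up, while any free users counted among the 1.5 million would pull it down, so the figure is best read as a rough order of magnitude.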

Conversely, Anthropic now faces the best kind of existential crisis: hyper-growth. Reaching the #1 spot on the Apple App Store brings an onslaught of mainstream users who have never used Claude before. The company's immediate challenge is no longer customer acquisition but infrastructure scaling and compute management: preventing further outages while maintaining the high quality of its model outputs.

Key Points: The OpenAI to Anthropic Migration

- Mass Exodus: More than 1.5 million users reportedly canceled their ChatGPT subscriptions in under 48 hours following OpenAI's classified contract with the Pentagon.

- Claude's Ascendancy: Anthropic's Claude chatbot surged to the #1 position on the Apple App Store, completely dethroning ChatGPT amid unprecedented public demand and quadrupled daily signups.

- The Ethical Divide: The migration was triggered after Anthropic refused to grant the US military unrestricted access to its models for autonomous weapons and mass surveillance, leading the Pentagon to label the firm a "supply-chain risk."

- CEO War of Words: Anthropic CEO Dario Amodei sent a leaked memo accusing OpenAI CEO Sam Altman of "straight up lies" and prioritizing employee pacification over preventing AI abuse.

- Big Tech Defiance: Microsoft, Google, and Amazon defied the spirit of the Pentagon's blacklist, officially confirming they will continue to offer Anthropic's Claude to their non-defense commercial cloud customers.

- Celebrity Influence: The #BoycottOpenAI movement gained massive mainstream traction, bolstered by public endorsements of Claude from celebrities like Katy Perry.

The Future of "Patriotic AI" vs. "Principled AI"

As the dust settles on this historic week in tech, the landscape of artificial intelligence is forever fractured. The US government is now scrambling to draft strict new AI guidelines for civilian contracts, attempting to mandate that tech companies permit "any lawful" use of their models and avoid partisan judgments. Meanwhile, OpenAI is quietly revising its agreement with the Pentagon to clarify language and quell the public relations disaster, though the damage to its consumer brand may already be irreversible.

Conclusion: A Watershed Moment in Technology

The events of early March 2026 show that artificial intelligence is no longer just a fascinating technological tool; it is a profound moral actor in global society. Consumers are increasingly aware of the existential risks posed by AI, and they are demanding accountability from the corporations that build it. By walking away from the world's largest military budget in defense of its ethical guardrails, Anthropic has earned something money cannot buy: the implicit trust of the public. As Claude holds the top spot on the App Store, the message to Silicon Valley is clear. In the race to build superintelligence, ethics are no longer an optional feature; they are the core product.

Frequently Asked Questions

Why are people uninstalling ChatGPT and switching to Claude?

A massive boycott began after OpenAI CEO Sam Altman signed an agreement to deploy OpenAI's models on classified Pentagon networks. Users who object to OpenAI aiding military applications are migrating to Anthropic's Claude, because Anthropic turned down the same Pentagon deal rather than allow its AI to be used for autonomous weapons or mass domestic surveillance.

What does it mean that Anthropic is a "supply-chain risk"?

After Anthropic refused the military's terms, the US Department of Defense officially labeled the company a "supply-chain risk." This severe national security designation is typically applied to companies tied to foreign adversaries. It legally bars the Pentagon from using Anthropic's technology and requires defense contractors to phase Claude out of their systems within six months.

Will I still be able to use Claude on Microsoft or Google platforms?

Yes. Despite the Pentagon's blacklist, Microsoft, Google, and Amazon have publicly confirmed that they will continue to offer Anthropic's Claude to their commercial, non-defense customers. Microsoft said its lawyers had reviewed the designation and concluded that Claude can remain available through platforms such as M365, GitHub, and Microsoft's AI Foundry, while Google confirmed that Claude remains available through Google Cloud.