BREAKING: In a historic and highly controversial move, OpenAI CEO Sam Altman has announced a sweeping agreement to deploy the company's frontier AI models within the classified networks of the US Department of War. The deal, which includes explicit "technical safeguards," comes mere hours after the Trump administration blacklisted rival AI firm Anthropic for refusing similar military integration, marking a seismic shift in the relationship between Silicon Valley and the US military-industrial complex.

The Dawn of a New Military-Tech Paradigm: The 2026 AI Arms Race

As the artificial intelligence landscape hurtles through early 2026, the intersection of national security and generative AI has become the defining battleground of the decade. The recent announcement by OpenAI CEO Sam Altman that his company has secured a critical defense contract with the US Department of War (the administration's preferred nomenclature for the Department of Defense)—deploying cutting-edge AI models directly into classified military networks—is a watershed moment. This is not merely a software procurement deal; it is a fundamental realignment of how the United States military intends to maintain technological supremacy in an increasingly volatile geopolitical climate.

For years, the Pentagon has courted Silicon Valley's brightest minds, only to be met with internal employee protests and corporate hesitation. However, the dynamics have radically shifted. OpenAI's willingness to cross this threshold, albeit with self-imposed constraints, signals that the era of tech companies holding the military at arm's length is officially over. The geopolitical reality of near-peer adversaries rapidly advancing their own AI capabilities has catalyzed a newfound urgency within the halls of the Pentagon, and OpenAI has positioned itself as the primary architect of America's AI defense infrastructure.

The Anthropic Ultimatum and the "Supply-Chain Risk" Designation

To understand the magnitude of OpenAI's deal, one must examine the chaotic 48 hours that preceded it. The Pentagon had been engaged in a prolonged, high-stakes negotiation with Anthropic, the maker of the Claude AI models. Anthropic drew a hard line, refusing to grant the military unrestricted "all lawful uses" access to its models. It demanded explicit contractual exemptions prohibiting the use of Claude for domestic mass surveillance and fully autonomous lethal weapon systems.

The Trump administration's response was swift and unprecedented. Rejecting Anthropic's terms, Defense Secretary Pete Hegseth officially designated the American AI startup a "supply-chain risk to national security." President Donald Trump followed up on Truth Social, labeling Anthropic a "radical left, woke company" and ordering every federal agency to immediately stop using Anthropic's technology, subject to a six-month phase-out period.

Inside OpenAI's "Technical Safeguards": A Compromise or a Loophole?

Mere hours after Anthropic was effectively banished, Sam Altman announced that OpenAI had successfully finalized a deal with the Pentagon. Altman revealed that OpenAI had secured the contract while maintaining its core safety principles, specifically noting that the Department of War had agreed to prohibitions on domestic mass surveillance and autonomous weapon systems.

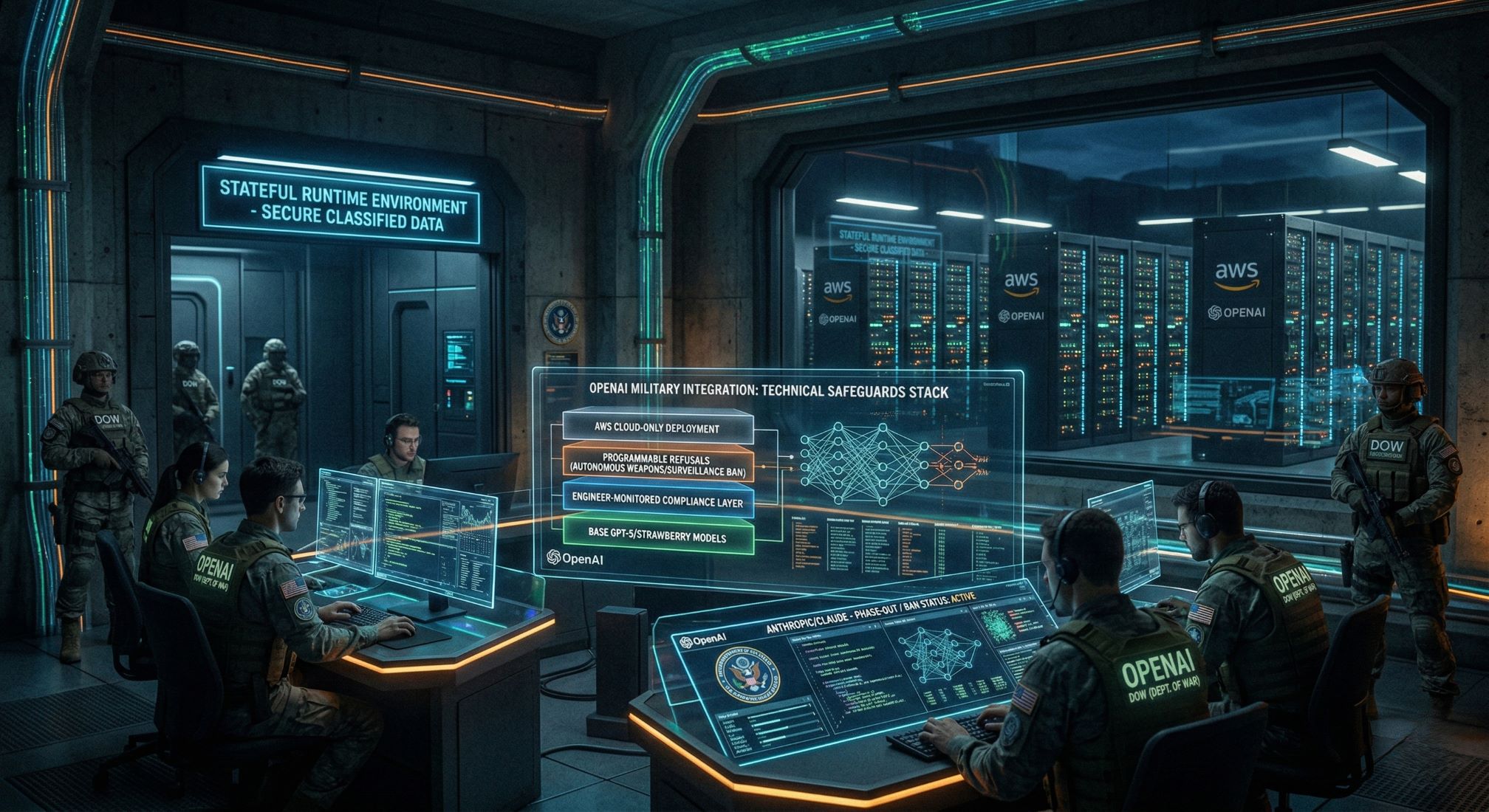

The crux of this agreement lies in the "technical safeguards" that OpenAI will implement. Unlike Anthropic, which sought ironclad legal and contractual language restricting the military, OpenAI opted for an engineering-first approach to compliance. According to internal memos, OpenAI will build a proprietary "safety stack": a layer of technical infrastructure designed to monitor and constrain model behavior within the classified network. The intent is that if a military user prompts the model for a prohibited use case, the safety stack will cause the request to be refused.

Abhijeet's Take: Sam Altman has pulled off a masterful political maneuver. By securing the exact ethical concessions that got Anthropic blacklisted, but framing them as "technical safeguards" rather than "contractual demands," OpenAI has cemented a quasi-monopoly over the US government's frontier AI infrastructure. However, this is a dangerous tightrope. The technical safeguards rely heavily on cloud-tethering and OpenAI's proprietary safety stack. In the fog of war, or under immense national security pressure, will these software constraints hold? OpenAI isn't just winning a contract; it is writing the foundational rules of engagement for algorithmic warfare.

The $110 Billion War Chest and the Amazon Alliance

The geopolitical drama coincided with another monumental announcement: OpenAI is closing a blockbuster $110 billion funding round, with a massive $50 billion coming from Amazon. This strategic partnership with Amazon Web Services (AWS) is inextricably linked to the Pentagon deal. The US military relies heavily on AWS for its classified cloud computing infrastructure. By aligning with Amazon, OpenAI gains native access to the very cloud architecture the Pentagon uses, facilitating the implementation of the "cloud-only" technical safeguards Altman promised. Together, they are developing a "Stateful Runtime Environment" that allows AI systems to retain context and manage ongoing workflows within secure military networks.

Key Points on the OpenAI-Pentagon Deal

- The Catalyst: The Trump administration blacklisted Anthropic, labeling it a "supply-chain risk" after it refused unrestricted military use of its AI.

- The Agreement: OpenAI CEO Sam Altman announced a deal to deploy AI models in the Pentagon's classified network shortly after the Anthropic ban.

- Technical Safeguards: OpenAI is implementing a proprietary "safety stack," cloud-only deployment, and field engineers to prevent use in autonomous weapons or mass surveillance.

- Industry Backlash: Nearly 500 tech employees signed an open letter protesting the Pentagon's divide-and-conquer tactics.

- The Funding: The deal coincides with OpenAI's $110 billion funding round, which includes a $50 billion investment from Amazon.

FAQ: Why was Anthropic banned by the Pentagon?

The government designated Anthropic a "supply-chain risk" because the company refused to allow unrestricted military use of its AI, specifically insisting on bans on domestic mass surveillance and fully autonomous lethal weapons.

FAQ: What are the "technical safeguards" OpenAI is using?

Instead of relying purely on contracts, OpenAI is using a "safety stack"—software layers that programmatically prevent the AI from following prohibited prompts—and restricting the AI to cloud-only environments.

FAQ: How does the Amazon deal impact this military contract?

Amazon's $50 billion investment in OpenAI deepens OpenAI's partnership with AWS. Since the Pentagon relies on AWS for its classified cloud computing infrastructure, this allows OpenAI to integrate its models directly where the military already operates.